Note

This is the website of the course taught in Fall 2024. If you are looking for the website of the course taught in Fall 2025, please click here.

Graduate-level course at ETH Zurich in Autumn Semester 2024

Lecturer: Christos Sakaridis.6 ECTS. Class size limited to 90 students.

ETH Course Catalogue

Lecture Team

- All

- General

- Project 1

- Project 2

- Website & Forum

Lectures

Date

Time

Room

Slides

Video

Topic

for Autonomous Cars

for Autonomous Cars (continued)

Vehicle-to-Vehicle Communication

Practical Sessions

Date

Time

Room

Slides

Video

Topic

Forum

We use Piazza as forum to discuss within students and with teachers. You can post private messages, anonymous messages are not allowed.

Abstract

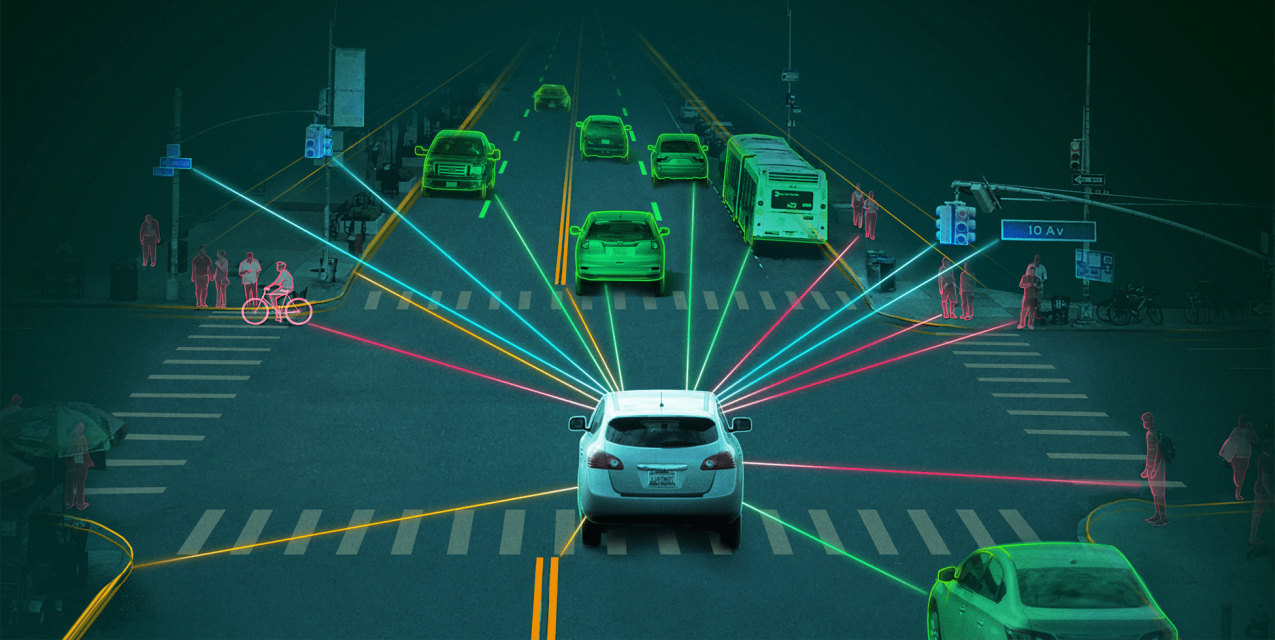

This course introduces the core computer vision techniques and algorithms that autonomous cars use

to perceive the semantics and geometry of their driving environment, localize themselves in it,

and predict its dynamic evolution. Emphasis is placed on techniques tailored for real-world settings,

such as multi-modal fusion, domain-adaptive and outlier-aware architectures, and multi-agent methods.

Objective

Students will learn about the fundamentals of autonomous cars and of the computer vision models and methods these cars use to analyze

their environment and navigate themselves in it. Students will be presented with state-of-the-art representations

and algorithms for semantic, geometric and temporal visual reasoning in automated driving and will gain hands-on experience

in developing computer vision algorithms and architectures for solving such tasks.

After completing this course, students will be able to:

- understand the operating principles of visual sensors in autonomous cars,

- differentiate between the core architectural paradigms and components of modern visual perception models and describe their logic and the role of their parameters,

- systematically categorize the main visual tasks related to automated driving and understand the primary representations and algorithms which are used for solving them,

- critically analyze and evaluate current research in the area of computer vision for autonomous cars,

- practically reproduce state-of-the-art computer vision methods in automated driving,

- independently develop new models for visual perception.

Content

The content of the lectures consists in the following topics:

- Fundamentals

- Fundamentals of autonomous cars and their visual sensors

- Fundamental computer vision architectures and algorithms for autonomous cars

- Semantic perception

- Semantic segmentation

- Object detection

- Instance segmentation and panoptic segmentation

- Geometric perception and localization

- Depth estimation

- 3D reconstruction

- Visual localization

- Unimodal visual/lidar 3D object detection

- Robust perception: multi-modal, multi-domain and multi-agent methods

- Multi-modal 2D and 3D object detection

- Visual grounding and verbo-visual fusion

- Domain-adaptive and outlier-aware semantic perception

- Vehicle-to-vehicle communication for perception

- Temporal perception

- Multiple object tracking

- Motion prediction

Projects

The practical projects involve implementing complex computer vision architectures and algorithms and applying them to real-world, multi-modal driving datasets. In particular, students will develop models and algorithms for:

- Semantic segmentation and depth estimation,

- 3D object detection using LiDARs.

Prerequisites

Students are expected to have a solid basic knowledge of linear algebra, multivariate calculus, and probability theory, and a basic background in computer vision and machine learning. All practical projects will require solid background in programming and will be based on Python and libraries of it such as PyTorch, scikit-learn and scikit-image.

Exam

Examiners:

Christos Sakaridis

A session examination is offered. The mode of the exam is written and its duration is 120 minutes.

The language of examination is English. The performance assessment is only offered in the session after

the course unit. Repetition is only possible after re-enrolling.

The final grade will be calculated from the session examination grade and the overall projects

grade, with each of the two elements weighing 50%. The projects are an integral part of the course,

they are group-based and their completion is compulsory. Receiving a failing overall projects grade results

in a failing final grade for the course. Students who do not pass the projects are required to de-register from the exam.

Written aids for the final exam: one A4 sheet of paper and simple non-programmable calculator.

A short mock exam with sample, representative multiple-choice and true-false questions is available below, without and with solutions, for the purpose of practicing. The volume of this mock exam is shorter than (and not representative of) that of the actual exam. Questions on the solutions of the mock exam will be discussed in the lecture of 06.12.2024.